AI inherits human permissions but skips their judgment.

This is Blog 3 in our Data Trust + AI Success Blog Series:

- Why seeing AI risk isn’t enough to protect you from it

- Security by obscurity just died. AI killed it.

- Why AI doesn’t behave like a human

- Why most AI projects are failing

- Why CISOs need a seat at the AI design table

In most enterprise security programs, it’s quietly assumed that the actor on the other side of a control is human. A person who can be trained, who will hesitate and, even with broad permissions, will exercise some natural judgment about what to open, what to share and what to leave alone.

In MIND's research, The Impact of Data Trust on AI Success, security leaders kept naming the same problem from different angles. The actor most of their controls were built for isn't the actor that's now moving through their data estate. AI doesn't pause. AI doesn't filter. AI doesn't decide that something isn't relevant before it surfaces it.

What matters: AI inherits broad permissions without human judgment. Every data security control gap is now open to something that doesn't hesitate.

Why don't enterprise security controls work on AI?

Every security control, policy and enforcement mechanism in the enterprise was designed with people in mind. Humans move at human speed. They can be trained, audited and held accountable. Even privileged users with broad permissions exercise some natural sense about what they share and when. A finance director with full access to compensation data still doesn't open every file in the folder.

AI agents inherit the same permissions but behave differently. They don't pause. They don't filter. They don't apply judgment to what they surface or use. When an agent connects to a data source, it finds everything within reach, not just what's relevant.
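To make the contrast concrete, here is a minimal Python sketch. Everything in it is hypothetical and invented for illustration: the `Document` type, the file names and the `human_opens` and `agent_reads` helpers are not drawn from MIND's research or from any product. It assumes a simple model in which a permission check only asks whether an identity may read a file, which is why the same credential produces very different results depending on who is driving it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    name: str
    readers: frozenset  # identities the permission model allows to read


def reachable(identity, documents):
    """Everything the permission model lets this identity read."""
    return [d for d in documents if identity in d.readers]


def human_opens(identity, documents, needed):
    """A person opens only the files the task at hand calls for."""
    return [d for d in reachable(identity, documents) if d.name in needed]


def agent_reads(identity, documents):
    """An agent on the same credential takes in everything it can reach."""
    return reachable(identity, documents)


# Hypothetical data estate: the finance director has broad access.
docs = [
    Document("q3_budget.xlsx", frozenset({"finance_director"})),
    Document("exec_compensation.xlsx", frozenset({"finance_director"})),
    Document("severance_terms.docx", frozenset({"finance_director"})),
    Document("eng_roadmap.md", frozenset({"eng_lead"})),
]

opened_by_person = human_opens("finance_director", docs, {"q3_budget.xlsx"})
read_by_agent = agent_reads("finance_director", docs)
```

In this sketch the person touches one file and the agent touches three, yet the permission check passes identically in both cases. The only thing that narrowed the human's footprint was judgment, and judgment is not part of the credential.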

The frameworks built around human behavior have no native language for what AI is doing.

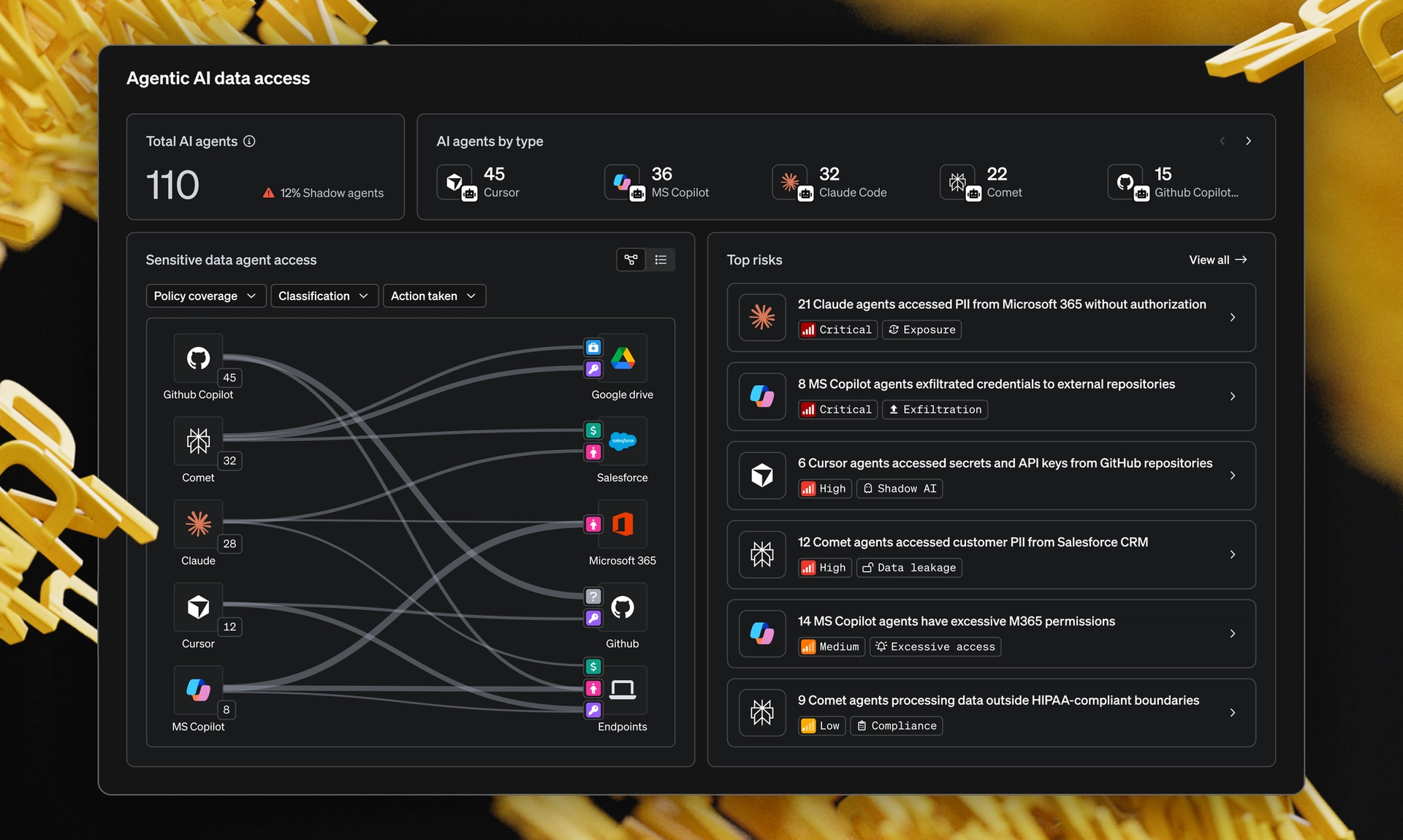

How widespread is the non-human actor problem?

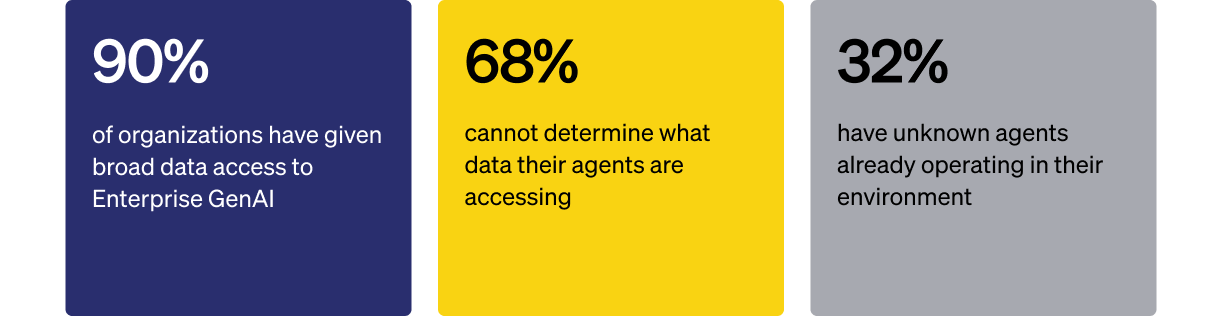

Wider than most security programs are prepared for. From MIND's research:

That last number is the one most CISOs in the research lingered on. A third of organizations already have agents inside their environment that nobody on the security team has inventoried. Those agents are reading, summarizing and acting against data using credentials that were inherited from humans and permissions that were written for humans.

The exposure isn't theoretical. It's already running.

What does this look like inside a real organization?

One scenario from the research keeps coming up in CISO conversations. A team member submitted a set of internal documents to a consumer AI tool for analysis. The tool's default settings permitted model training on submitted content. The data had left the building and no one knew where it went.

There's no signature here. No alert pattern. No malicious actor to investigate. Just an employee using a tool that did exactly what it was supposed to do, against a policy framework that assumed the employee would be the one deciding what to share. The judgment layer was missing because the framework never expected it to be needed.

Data estates that were never fully classified become comprehensively and immediately exposed the moment an agent is pointed at them. The exposure isn't created by AI. It was already there. AI is what makes it visible at machine speed, to systems that don't apply human judgment about what they should or shouldn't surface.

What does data security at AI speed actually require?

A different mental model for who you're protecting against. The actor inside your data estate is no longer always a person. Sometimes it's an inherited credential being driven by a model. Sometimes it's an agent your IT team approved last quarter. Sometimes it's a sanctioned LLM running against a SharePoint that was never classified.

This is where MIND focuses. MIND isn't just enforcing the rules you already had on human users. It's minding your data, whether or not the actors using it are human. That means classifying what your AI tools can reach, governing what they can do once they're connected and surfacing the agent activity that's already happening inside your environment so your team can act on what matters and leave the noise behind.
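As a rough illustration of what classifying, governing and surfacing could look like in code, here is a small Python sketch. It is not MIND's implementation or API. The `Sensitivity` labels, the `Actor` and `Resource` types, the `ceiling` setting and the `decide` function are all assumptions made up for this example. The idea it shows is simple: a non-human actor needs more than an inherited permission, and every decision is recorded so agent activity can be reviewed.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class Actor:
    name: str
    is_human: bool
    # Hypothetical policy knob: highest label a non-human actor may read.
    ceiling: Sensitivity = Sensitivity.INTERNAL


@dataclass(frozen=True)
class Resource:
    path: str
    label: Optional[Sensitivity]  # None means the data was never classified


def decide(actor, resource, has_permission, audit_log):
    """Return (allowed, reason) and record the decision for later review."""
    if not has_permission:
        verdict = (False, "no permission")
    elif actor.is_human:
        verdict = (True, "human actor; existing controls apply")
    elif resource.label is None:
        verdict = (False, "unclassified data is off limits to agents")
    elif resource.label > actor.ceiling:
        verdict = (False, "label exceeds agent ceiling")
    else:
        verdict = (True, "within agent ceiling")
    audit_log.append((actor.name, resource.path, *verdict))
    return verdict


# Hypothetical actors and resources.
audit = []
copilot = Actor("sales_copilot", is_human=False)
analyst = Actor("analyst", is_human=True)
wiki = Resource("/wiki/onboarding.md", Sensitivity.INTERNAL)
payroll = Resource("/hr/payroll.xlsx", Sensitivity.RESTRICTED)
legacy = Resource("/sharepoint/old_site/export.csv", None)

agent_results = {
    r.path: decide(copilot, r, True, audit)[0] for r in (wiki, payroll, legacy)
}
human_result = decide(analyst, legacy, True, audit)[0]
```

With these made-up inputs, the agent is allowed to read the internal wiki page but is denied the restricted payroll file and the unclassified SharePoint export, even though the inherited permission would have allowed all three. The human analyst keeps the access they already had. Denying unclassified data by default is one possible design choice for this sketch, not a recommendation taken from the report.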

The work ahead is building a data foundation that can be governed at the speed of AI.

Where do CISOs go from here?

This is one finding from a larger pattern. The full report walks through all seven insights, with direct CISO quotes and a clear set of recommendations for security leaders trying to govern AI without slowing their business down.

Read The Impact of Data Trust on AI Success to see how high-performing teams are designing data security for actors that don't behave like humans.
