Visibility into AI risk is everywhere. Real-time enforcement still isn't.


This is Blog 1 in our Data Trust + AI Success Blog Series:

- Why seeing AI risk isn’t enough to protect you from it

- Security by obscurity just died. AI killed it.

- Why AI doesn’t behave like a human

- Why most AI projects are failing

- Why CISOs need a seat at the AI design table

- Why AI is a stress test of your security fundamentals

Most security teams know where AI is showing up. They've inventoried the tools and written acceptable-use policies. That's real progress. It's also given a lot of teams a false sense of control.

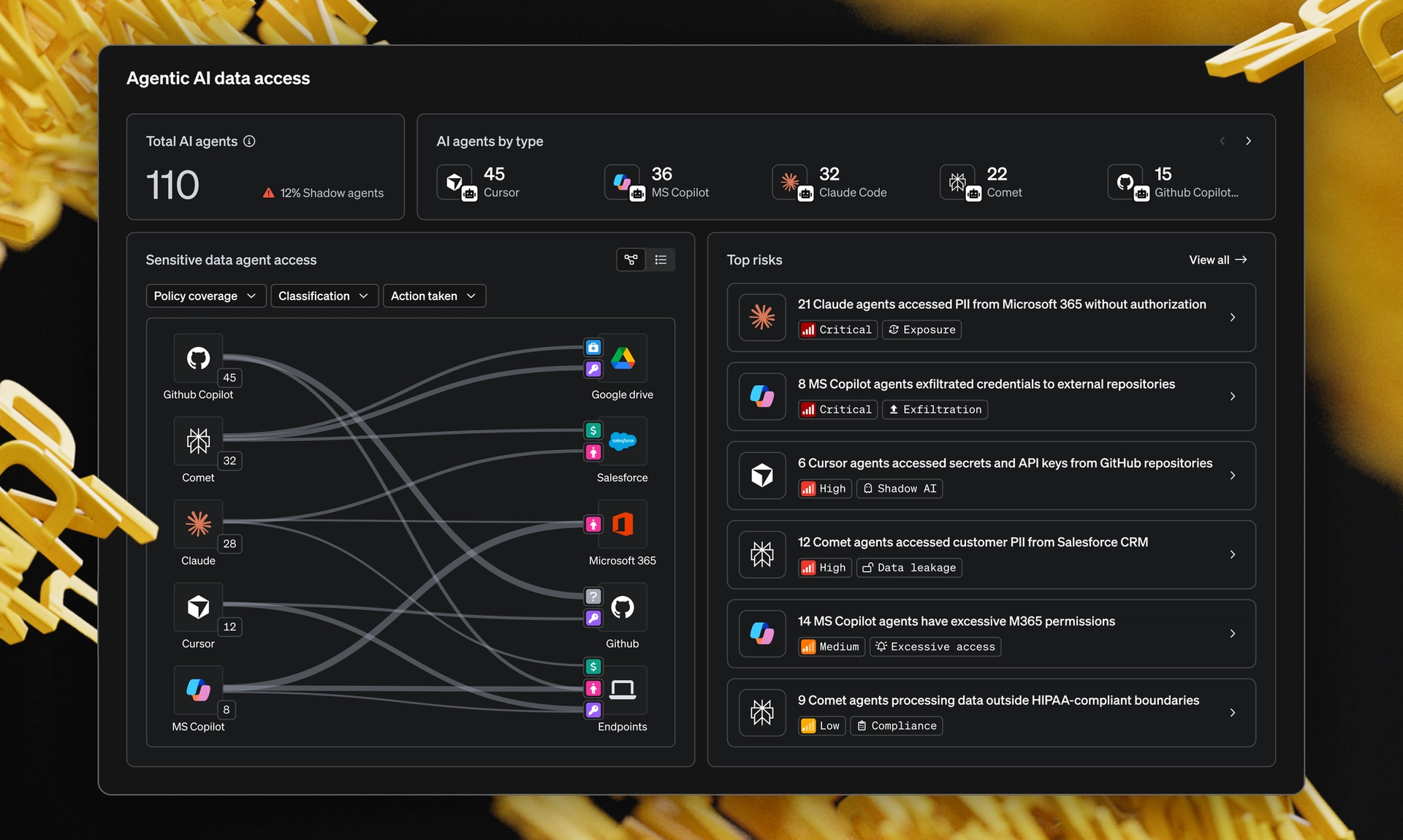

In MIND's research, The Impact of Data Trust on AI Success, one insight kept surfacing across CISO interviews: there's a consistent gap between visibility and enforcement. Teams know what's happening. They can't reliably control it at the moment it matters.

What matters: Organizations can see AI risk. They can't enforce policy at AI speed.

Where does AI enforcement actually break down?

The issue isn't awareness. Security teams have already invested in governance, policy design and visibility across GenAI tools and agents. Most know what good looks like.

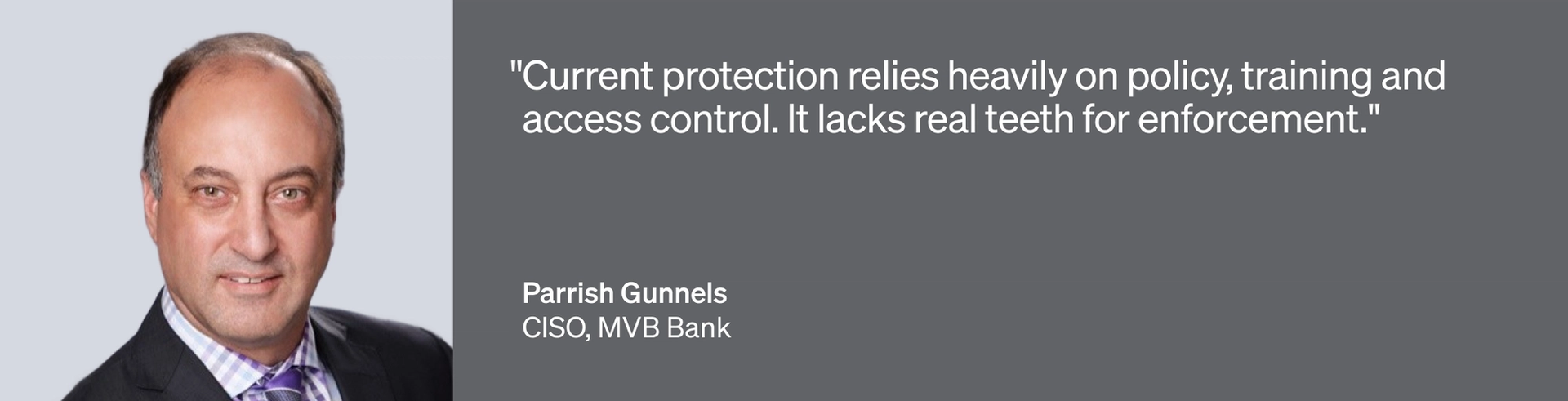

What breaks is execution. The real-time mechanisms to apply those rules don't exist in most environments. As one CISO in our research put it, their team could clearly define acceptable AI use, but struggled to enforce those rules once real interactions began.

With good policies defined, the question becomes whether enforcement can actually run at the speed AI moves.

Why do traditional security controls fall short for AI?

Most security controls were built for checkpoints. A user logs in, opens a file or makes a request. A control evaluates the action. There's a clean moment to step in.

AI removes those moments. Once connected, it accesses and transforms data continuously. Decisions happen inside the flow, not at the edge.

The implication is uncomfortable. Data can be summarized, combined or exposed before any control has a chance to act. Visibility shows you what happened. It doesn't stop it from happening.
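To make the checkpoint problem concrete, here is a minimal Python sketch. Everything in it is hypothetical and for illustration only: the `Document` type, the `checkpoint_allows` rule, the `"finance"` role and the `summarize` stand-in for an AI step are assumptions, not taken from the research or any product.

```python
from dataclasses import dataclass


@dataclass
class Document:
    name: str
    text: str
    sensitive: bool


def checkpoint_allows(user_role: str, doc: Document) -> bool:
    """Checkpoint-style control: evaluated once, at the moment a file is opened."""
    return (not doc.sensitive) or user_role == "finance"


def summarize(docs: list[Document]) -> str:
    """Hypothetical stand-in for an AI step that combines several sources."""
    return " | ".join(d.text for d in docs)


docs = [
    Document("roadmap.txt", "Q3 launch plan", sensitive=False),
    Document("payroll.csv", "salary: 185000", sensitive=True),
]

# The assistant acts on behalf of a finance user, so each read passes the check.
readable = [d for d in docs if checkpoint_allows("finance", d)]

# Visibility: the audit log faithfully records what was read.
audit_log = [f"read {d.name}" for d in readable]

# The combined output is produced after the checkpoint; no control evaluates it.
summary = summarize(readable)
```

In this sketch every individual read is approved and logged, yet the combined summary carries the payroll detail onward and nothing inspected it. The log explains what happened; it does not change what happened.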

Can human-driven enforcement keep up with AI?

When technology systems can't enforce policy, people and process are asked to fill the gap. Training increases. Guidelines expand. Teams rely on users to make the right call in the moment.

That model struggles with AI. Interactions are faster, context is less visible and downstream impact is harder to spot. Even well-intentioned users can't consistently apply policy they can't see.

Several CISOs in the research called this "policy by hope." It works until it doesn't, and at AI speed, failure happens fast.

How often does this enforcement gap actually hit?

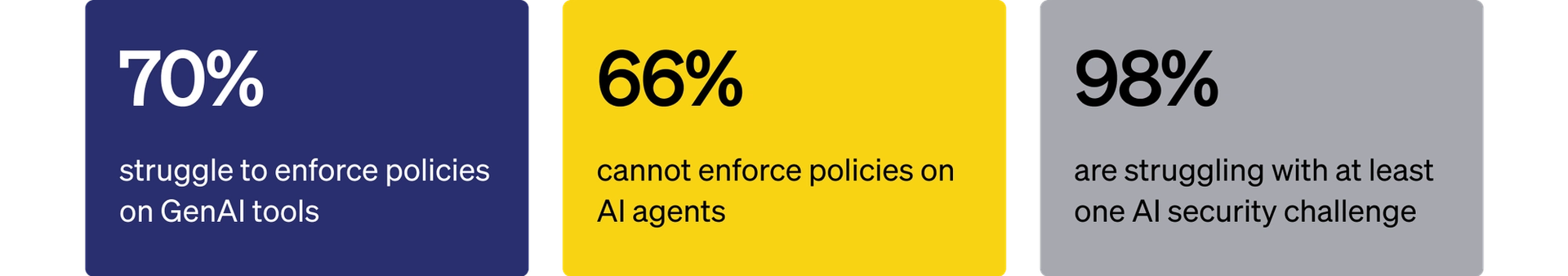

This isn't an edge case. According to MIND's research report, The Impact of Data Trust on AI Success, 70% of organizations report difficulty enforcing policies on GenAI tools. That's a broad operational reality, not a niche concern.

As AI becomes part of daily workflows, the gap moves from theoretical to constant. Data gets accessed more often, across more systems, without clear control points. Investigating incidents after the fact becomes a pattern many companies live with.

Security teams can explain what happened. What they can't do is consistently prevent it in real time.

What does data security at AI speed actually require?

Closing this gap takes a different approach to enforcement. Access controls aren't enough anymore. You have to govern data the moment it's used, not after.

Enforcement has to run continuously and in context, without waiting for a human to decide. It has to operate at the speed of the systems it's governing.
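As a rough illustration of what "continuously and in context" can mean, the Python sketch below applies policy rules to each piece of data as it flows toward an AI tool, rather than once at access time. It is a simplified sketch under stated assumptions: the `enforce` wrapper, the `redact_ssn` rule, the `destination` context key and the SSN-style pattern are all hypothetical names chosen for the example, not a description of any specific product.

```python
import re
from typing import Callable, Iterable, Iterator

# Hypothetical rule type: takes a chunk of data plus context, returns the chunk to pass on.
Rule = Callable[[str, dict], str]

# Illustrative pattern for US SSN-style identifiers.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact_ssn(chunk: str, context: dict) -> str:
    """Redact SSN-like values, but only when data is leaving for an external model."""
    if context.get("destination") == "external_llm":
        return SSN_PATTERN.sub("[REDACTED]", chunk)
    return chunk


def enforce(chunks: Iterable[str], context: dict, rules: list[Rule]) -> Iterator[str]:
    """Apply every rule to every chunk at the moment of use, with no human in the loop."""
    for chunk in chunks:
        for rule in rules:
            chunk = rule(chunk, context)
        yield chunk


prompt_chunks = ["Employee SSN 123-45-6789", "Meeting at noon"]

outbound = list(enforce(prompt_chunks, {"destination": "external_llm"}, [redact_ssn]))
internal = list(enforce(prompt_chunks, {"destination": "internal_tool"}, [redact_ssn]))
```

The same data yields different outcomes depending on where it is headed: the outbound copy has the identifier redacted, while the internal copy passes through unchanged. That is the "in context" part; the per-chunk loop is the "continuous" part.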

This is where MIND focuses. Stress-Free DLP doesn't add more policy. It makes the policy you already have enforceable in real time, across endpoints, SaaS and AI. We aren't just stopping violations. We're minding the moments where AI quietly turns visibility into exposure, so your team can act on what matters and ignore what doesn't.

How are CISOs closing the AI enforcement gap today?

This is one finding from a larger pattern in the research. CISOs aren't questioning whether AI gets adopted. They're trying to control it without slowing the business down.

The full report goes deeper: where enforcement is breaking inside real environments, which controls are actually holding up in production today and how high-performing teams are closing the gap. It includes direct quotes from CISOs and the specific approaches they've put in place.

Read The Impact of Data Trust on AI Success to see what's working, what isn't and where to invest first.
