When Microsoft confirmed that a bug allowed Copilot to surface and summarize emails marked confidential despite existing DLP controls, it reignited urgent questions about Microsoft Copilot security, DLP bypass risk and enterprise AI data protection. The reaction was immediate.

For many CISOs and security leaders responsible for Microsoft 365 security and AI risk management, the incident confirmed exposure risks they already knew existed.

AI assistants like Microsoft Copilot do not invent risk. They illuminate it. In this case, the Copilot DLP bypass highlighted a deeper enterprise AI security issue: data trust across Microsoft 365 and SaaS environments is not where it needs to be.

Copilot is easy to adopt. It integrates seamlessly into the Microsoft ecosystem. It promises productivity gains without heavy infrastructure changes. For organizations under pressure to move quickly into an AI-enabled future, enabling it feels natural and even necessary.

The controls were in place. The Microsoft 365 tenant was secured. DLP policies were configured.

Yet speed has a cost.

In the rush to capture AI value, many teams did not fully evaluate how sensitive data was classified, how permissions had expanded over time or how enforcement behaved under new AI-driven access patterns. What this moment exposed was not a single bug, but a broader condition: structural data fragility.

Over years, sensitive data sprawl, permission creep and incomplete classification quietly compound. The environment remains operational. Compliance boxes are checked. But structural risk accumulates beneath the surface, largely invisible until AI begins operating across it at scale.

How did the Microsoft Copilot DLP bypass reveal a data trust gap?

AI operates at machine speed. It searches, correlates and summarizes across vast volumes of content in seconds. If sensitive information is accessible within the environment, it will surface.

The core issue is not simply that Copilot bypassed DLP controls. The deeper issue is that protection depends on trust in your data foundation. Trust that you know what is sensitive, that it is accurately classified and that policies are enforced consistently across the estate.

Without that trust, controls become fragile.

For years, unstructured data has multiplied across cloud drives, SaaS, collaboration platforms and email systems. Files were shared. Access was granted. Exceptions were made. Policies were written but rarely continuously validated.

AI did not create this complexity. It revealed it.
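To make "continuously validated" concrete, here is a minimal Python sketch of a check that compares who can actually read an item against what its sensitivity label should allow. Everything in it is an assumption for illustration: the `Item` record, the `ALLOWED_READERS` policy table and the group names are hypothetical and do not correspond to any Microsoft 365, Purview or Copilot API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Item:
    """Hypothetical inventory record, not a Microsoft 365 object."""
    path: str
    label: Optional[str]  # sensitivity label, or None if never classified
    readers: set[str] = field(default_factory=set)  # groups with read access


# Assumed policy table: which groups may read content carrying each label.
ALLOWED_READERS = {
    "confidential": {"legal", "finance"},
    "internal": {"legal", "finance", "all-employees"},
}


def validate(items: list[Item]) -> list[tuple[str, str]]:
    """Return (path, finding) pairs where actual access contradicts the label."""
    findings = []
    for item in items:
        if item.label is None:
            findings.append((item.path, "unclassified"))
            continue
        allowed = ALLOWED_READERS.get(item.label)
        if allowed is None:
            findings.append((item.path, "label has no policy"))
            continue
        extra = item.readers - allowed
        if extra:
            findings.append(
                (item.path, "overexposed to " + ", ".join(sorted(extra)))
            )
    return findings
```

Run on a schedule against a fresh inventory, a check like this would surface unclassified files, labels that no policy covers and access that has drifted past what the label permits, which are the same gaps an AI assistant can expose at scale.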

Is Microsoft ecosystem security enough without a strong data foundation?

Many leaders assumed that operating inside a trusted vendor ecosystem meant they were inherently protected. Platform security is critical, but it cannot compensate for incomplete visibility into your own data landscape. AI exposes the difference between compliance and confidence.

Compliance asks, "Do we have DLP rules?"

Confidence states, "We truly understand what matters across our data estate."

Data trust means:

- Continuously discovering sensitive data wherever it lives

- Accurately classifying it with business context

- Monitoring how it is accessed and used

- Detecting anomalous behavior in real time

- Remediating risk automatically and consistently

- Preventing data leaks and enforcing policies without relying on manual intervention

When these elements operate together, AI becomes an accelerator. When they do not, AI becomes a magnifier of existing gaps.
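The discover, classify and remediate steps in the list above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a description of how MIND or Microsoft DLP works: the regular expressions, the two-level label scheme and the function names are all hypothetical, and real detection needs validation and business context that simple patterns cannot provide.

```python
import re

# Illustrative patterns only (assumed); real detectors validate matches
# and weigh surrounding context to limit false positives.
PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number_like": re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b"),
}


def discover(text: str) -> set[str]:
    """Return the names of the sensitive patterns found in the text."""
    return {name for name, pattern in PATTERNS.items() if pattern.search(text)}


def classify(hits: set[str]) -> str:
    """Map detections to a label using an assumed two-level scheme."""
    return "confidential" if hits else "general"


def plan_remediation(current_label, text, shared_externally):
    """List the actions needed so the label and sharing match the content."""
    suggested = classify(discover(text))
    actions = []
    if suggested == "confidential" and current_label != "confidential":
        actions.append("apply confidential label")
    if suggested == "confidential" and shared_externally:
        actions.append("revoke external sharing")
    return actions
```

For example, `plan_remediation(None, "SSN 123-45-6789", True)` returns both actions, while a correctly labeled, internally shared file returns an empty list. The design point is that remediation is derived from what the content contains, not from whatever label it happens to carry.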

How can organizations build data trust and achieve DLP at AI speed?

The Copilot story should not drive panic. It should drive reflection and action.

Organizations deserve to securely innovate at the speed of AI.

Data trust is not a feature; it is an architectural commitment. It requires moving beyond static rules and fragmented tools toward a unified approach that continuously understands, prioritizes and protects what matters most.

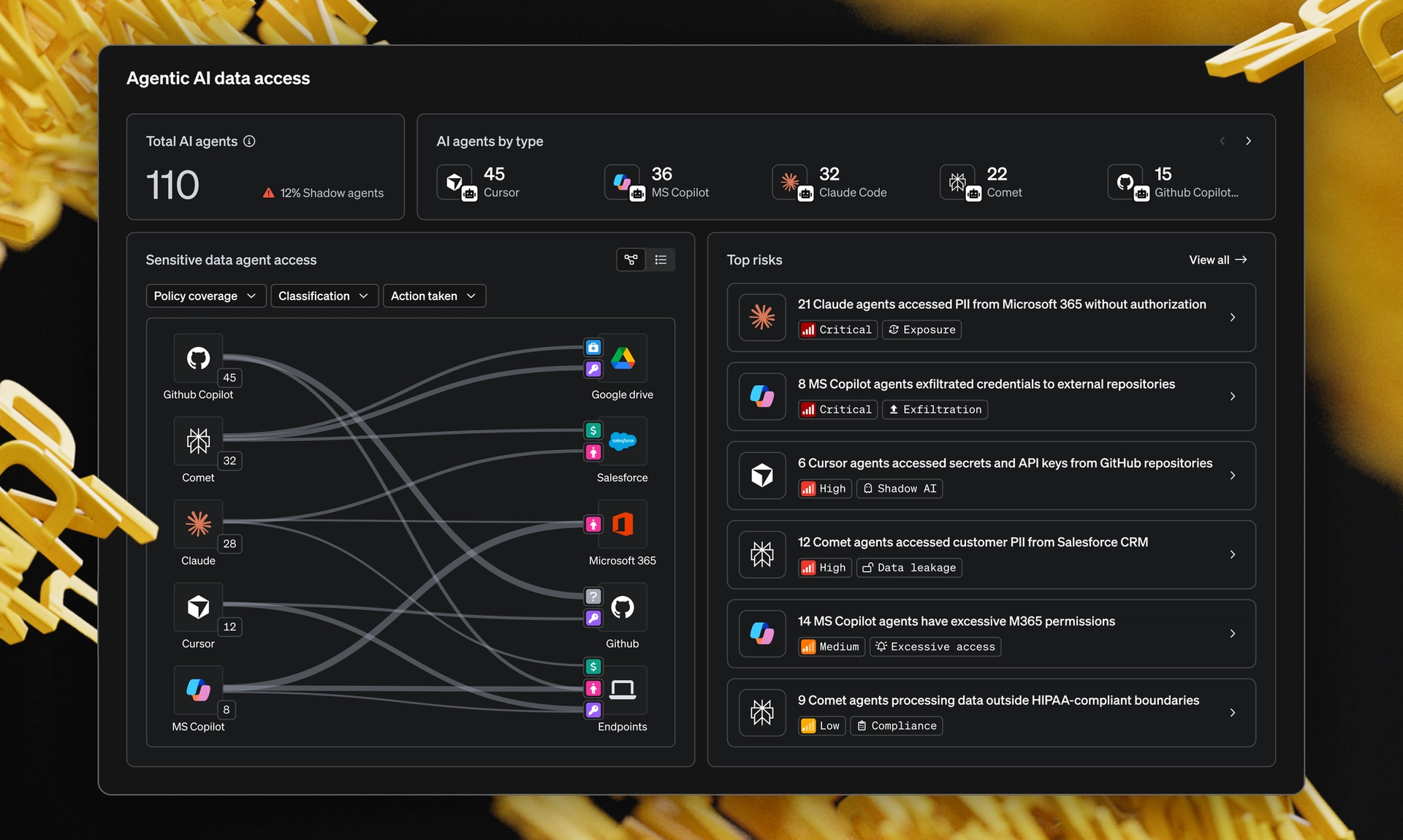

This is where modern, AI-native data security platforms like MIND play a critical role.

MIND was built to establish data trust at scale and deliver Stress-Free DLP. It continuously discovers sensitive data and enables on-demand classification across SaaS, on-premises file shares, endpoints, email and emerging AI tools, so protection keeps pace with change. It applies context-aware detection, prioritizes real risk and automates remediation to reduce noise and remove manual toil. The result is DLP on Autopilot, an intelligent system that understands your business and protects what matters without slowing it down.

When data trust is embedded across your environment, AI initiatives are no longer a source of uncertainty and exposure risk. They become sustainable, measurable and defensible.

Security and AI innovation do not have to compete.

But without data trust, they will.

The future of enterprise AI security will belong to organizations that move fast but thoughtfully, with clarity about what matters and confidence that the data Microsoft Copilot can reach is continuously protected by DLP controls built on data trust.