Transcript

Samuel Hill

Hello and welcome to Mind What Matters, the show where data security meets real talk. We're here to unpack big stories, interesting things, hidden risks and all of the curious moments from the world of cybersecurity. And gosh darn it, we're going to try and have some fun while we do it. So whether you're protecting data in the boardroom or just on your phone, we're here to help you mind what matters.

My name is Samuel Hill, joined as always by my partner in crime, the one that I would absolutely give my phone call to if I was arrested, Landen Brown. Landen, how are you today, bud?

Landen Brown

I'm good, Sam. How are you? It's almost Thanksgiving, we're preparing for the holidays. How's your family? Does your family have traditions like mine does? Do you kind of get ready for the holidays? Is your Christmas tree up directly after Halloween like mine is?

Samuel Hill

You know, Landen, it's funny you ask that because I am typically staunchly in the camp of, we must get through Thanksgiving before the Christmas decorations come up. I love Thanksgiving. It is one of my absolute favorite holidays in the U.S. holiday calendar. It's just such a great time.

Now, I made the mistake, my wife had bought a new holiday doormat at a store. It was in the van and I just threw it in front of our front door. So my wife saw me do that and thought to herself, well, game on, I guess we're decorating early. So now, Landen, my Christmas lights are up. All of the holiday bins have been removed from the crawl space storage. And so yes, Christmas decorating is happening a lot earlier this year than I normally would want it to.

Landen Brown

That's okay. I can somewhat sympathize with you. I have five children, for those of you that have never met me. And when you're desperately looking for activities that can entertain all five children and Christmas time is around the corner, decorating the tree can take a long time for five children. And so we tend to start opening that up probably earlier in the year than usual.

But I'm not really one to complain about the Thanksgiving timeframe. We lived in Virginia for a long time and the reason we moved back to Idaho was to have Thanksgiving with my mother, Mama Brown, who makes, arguably, and I'll die on a hill, one of the best Thanksgiving dinners out there. So part of the only reason we're in Idaho now is because of it. So I guess, who am I to complain? The kids are happy, we're getting fed well and we're going to have a good holiday season.

Samuel Hill

You know, if there's a hill to die on, it's grandma's Thanksgiving meal. I feel like that's a worthy hill to die on, that it's good and delicious.

Now I have kind of a hot take for you. I'm curious your opinion here. I do not like the Thanksgiving turkey. I'm not a fan.

Landen Brown

You know, you're not alone. It's the one food on Thanksgiving that I actually refuse to eat, turkey. And I've had it every which way. I've had it deep fried, I've had it smoked, I've had it just in the oven. Not a fan.

Instead, we are a ham family for Thanksgiving.

Samuel Hill

Okay. And the other thing I'm not a fan of on Thanksgiving is the Dallas Cowboys still playing football on Thanksgiving Day. Why? Why are we still subjecting ourselves to this torture? I don't know.

Well, Landen, let's dive into some stories that we're talking about this week as we Mind the Headlines.

And this week, my first one that I wanted to share with you, gosh, do you want to feel old for just a second? I saw this come across and I was thinking, man, is it really? Are you serious? Apple, the iconic Apple company, is turning 50 years old. Landen, how did this happen?

Landen Brown

That's insane, right? Because you still remember the years in which not just Apple was a new company, but when Steve Jobs was young. And we watched him get older and get sick. And we watched the change of Apple after that, which seems like yesterday.

I mean, Apple's been around long before just the iPhone, but the iPhone seems like it was just yesterday when in reality it was almost 20 years ago just by itself, right?

Samuel Hill

Yeah, I think my first modern Apple product was an iPod Touch. Do you remember that? The iPod Touch was like the precursor form factor to the iPhone, but it was just the iPod.

And I remember the iPhones were just so exorbitantly expensive, or it just wasn't right for me to have an iPhone. And I remember my first was an iPhone 3 that I got as part of a company that I worked at. We had a company phone distribution thing, and so I got an iPhone 3 and I thought heaven had descended to earth finally. Do you remember?

Landen Brown

You know, I actually have a conspiracy that's somewhat similar to this, Samuel, that pockets today aren't made as strong as pockets used to be back in the early 2000s. Because when I was a child, I had one of those massive iPod Touches that was a brick. I mean, to fit it in your pocket, today's pockets would just fall right down to the floor.

Back then, our jeans pockets must have been made differently because it was about 10 times the weight of my phone that's here on my desk.

Samuel Hill

Yeah, but somehow the clothes were built to handle it then. Obviously Apple has changed the world, including our fashion. Maybe not for the better, I don't know. We'll find out about that.

But Apple turns 50. Recording this podcast at almost exclusively Apple gear in my little home office here, it's quite remarkable. But who knows what our kids will be telling us is weird when it turns 50.

Samuel Hill

All right, this next story I wanted to bring up, and Landen, you sent this over to me. It's about, I read the email, they sent me a screenshot of the email that they sent out, but the University of Pennsylvania had a cybersecurity incident where the bad guys got into the email systems and decided to send a very choice email to some of their donors and supporters.

Landen, why was this interesting to you? Why did you send this across? What's going on?

Landen Brown

You know, I think there's still some investigation to be done. I do want to make that clear. It's not quite known yet, at least from the information that's been released, whether this was actually an internal disgruntled employee or whether this was an external malicious actor that had access to all kinds of internal tooling.

I think regardless, what it is highlighting for me is, one, the email itself is hilariously insulting if you have a chance to read it. But it also highlights how intertwined insider threat and DLP are in an organization.

And I think commonly, where we see a lot of education customers, their education organizations are either not being able to or just choosing not to spend enough on technology and security technology to prevent these kinds of things from happening.

And unfortunately, in this case, what we saw was somebody get access to the system, send an email out to everybody calling them a few derogatory terms, I would say.

Samuel Hill

Let's just say this email is not repeatable on this podcast. We'd like to stay in good graces with the leaders here.

Landen Brown

That's right. But it does point out that insider threat or insider risk and data loss prevention are intertwined more than ever nowadays, especially with the rise of AI. And this isn't even a use case related to that.

But we're also seeing some areas where this may have legal fallout for Penn, where they're pointing out, hey, we're breaking laws like FERPA. We're breaking laws tied to Supreme Court filings, things that these potential alleged hackers have access to that they may also release to the broader public.

Samuel Hill

Yeah, that's really the risk here, right? If they're found to be negligent with either a FERPA violation or against some of the things the Supreme Court has said, that's the big issue. I mean, if I'm a donor at the University of Pennsylvania and I receive an email like that, I think it's pretty clear what's going on. It's not going to change my opinion one way or another, whether I would give money or not give money to my alma mater, which I am not a Penn State grad or a University of Pennsylvania grad, so it's neither here nor there.

However, the real issue here is where are they also at risk in their organization? What data is also exposed? This email is almost like a red herring in some ways. The real risk is probably still hiding either in the legal proceedings or in access that they currently have.

Landen Brown

Yeah, I think it's one of the things that we always tend to forget.

I remember, probably back in the 2017 to 2020 or 2021 era, the big focus in cybersecurity and cyber defense was to stop APTs. It was to track the TTPs of APTs as they progress and evolve through an organization through multilayered defense and all the things that are still true and relevant today.

I think the difference is, today, a lot of attackers don't come in to destroy, they come in to watch and steal. And so if an attacker is letting you know that they're there, then they've probably already gotten what they need to get you in some very serious ways.

Samuel Hill

That's the tip of the iceberg. If they're sending emails, there's already a lot going on there.

All right. Hey, so here's one. File this under did not see this one coming at all. My goodness. Who could have possibly foreseen this becoming a problem in our industry? But ChatGPT seems to be having some problems with people doing some prompt injection attacks. Wow. Didn't see that one coming. Who would have thought that a ChatGPT prompt could be hijacked and malicious executables could be injected into it?

Landen, could you explain what a prompt injection is? Why is this worrisome? Why is everyone talking about it? Should we continue talking about this? Your reaction.

Landen Brown

Yeah, you know, I may stand alone a little bit in this opinion, but I guess if we were to cover what a prompt injection is first, a prompt injection is ultimately a way of me, as a human, injecting some kind of subliminal instruction into some kind of LLM to get the LLM to do what I want.

Now, one of the challenges with LLMs, even for companies as large as OpenAI and Perplexity and the rest, is that in order for us to have control over a large language model that's using some kind of generation of text based off the models and weights that it has, I have to have some way to administratively give safety guidelines and instructions to the LLM.

Because at the end of the day, the LLM is just a big math equation that says, based off the weights and the order of the words you gave me, I'm going to give you something that matches what I think the weights and the order of words should be back.

And so the LLM doesn't even really know what it's doing at the end of the day. You have to have these baked instructions to really talk with it and limit it and give it safety guardrails and all of these other things.

The challenge is that these safety guardrails keep getting found in interesting ways. And LLMs, because they're stacking the weighting of what word is next and what word is before and the probability and all of that, it actually seems to me that the prompt injection method is always going to persist. It's always going to be a problem.

And I think it's really one of those areas where we'll see some interesting innovation over the next couple of years. So I think it's also one of those areas where we go back to the foundations. We go back to, hey, I could never build a web page or a web form without input validation.

And this is really what MIT has talked about in a similar sense of agent consensus. Before I let my primary LLM operate and return something back to you, can I have my other agents, maybe my other LLMs, cross-check what we're producing before we give something back? The question then becomes, can those also suffer from prompt injection throughout that chain of reasoning?

Samuel Hill

And so, it really comes down to how susceptible they are to these erroneous instructions or guardrails being injected into their process and into their math equation.

Obviously, it makes a lot of sense that we would try and find some ways to steer the math equation. There are some things about the universe that we kind of know to be true, like the rate of gravity or the speed of light or things like that. So some guardrails are generally good.

But again, file this under no one saw this coming, that people would try and break the guardrails or inject malicious things into ChatGPT.

Now the issue becomes, because this is not just a ChatGPT or an OpenAI problem, of course. It's every large language model that's out there in a public-facing way. And now they're being so interconnected, especially into companies that are using these tools to connect them either to their data sources to make sense of things or to find some way to have a competitive advantage based off the speed, function and scale of an artificial intelligence model that can parse large quantities of data quickly.

Now, if we have a prompt injection that gets access to whatever your LLM is using and that has access to your sensitive data, now we're putting data security at risk because of an injected attack.

Landen Brown

Yeah, I think it's entirely possible. I think this is one of the reasons why more organizations are looking to adopt third-party and proprietary LLMs, and not just the LLM itself, but also their platforms. Because I think there is a more strict security approach for these platforms.

The challenge then is it still all connects back to our data, which means our data has to move. Something has to read it. It can't just read it where it's at, or it has to read it where it's at. And it really is all of these other concerns that we should be able to answer to our customers, which is where is my data? Who has access to it? Where is it going? And can we prove what the third parties are doing with it once they have it as well?

And I think this particularly, not just for data security, but becomes a big issue in the future where we're starting to see these claims that there's going to be no way to differentiate either legally or administratively the actions of an agent versus a human.

An example being, if I have a Shopify account that's going to make orders on Amazon, there's no difference between that agent making that order on Amazon versus me. According to the acceptable use policy in Amazon, you could try to make an argument. But people have already won cases against Amazon saying my agentic AI is a person. It is a thing that is allowed to go and do this and reason just as I would.

And so we're starting to get into these areas where organizations are adopting agentic AI not just to use as an LLM here and there to grab data, but also to enact changes, to kind of set up the next wave of having an agentic workforce.

I think the challenging thing there is, even today with the most advanced up-to-date models now, we're still seeing agents completely blue-screen boxes when asking them to rename a file. And so there's a big danger here that is not just data being accessed when it shouldn't, but also the destruction of data, which is the inverse problem of what we were just talking about earlier, where data theft is now the problem of an APT. And now agents are reintroducing this data-destruction chain that we have going on.

Samuel Hill

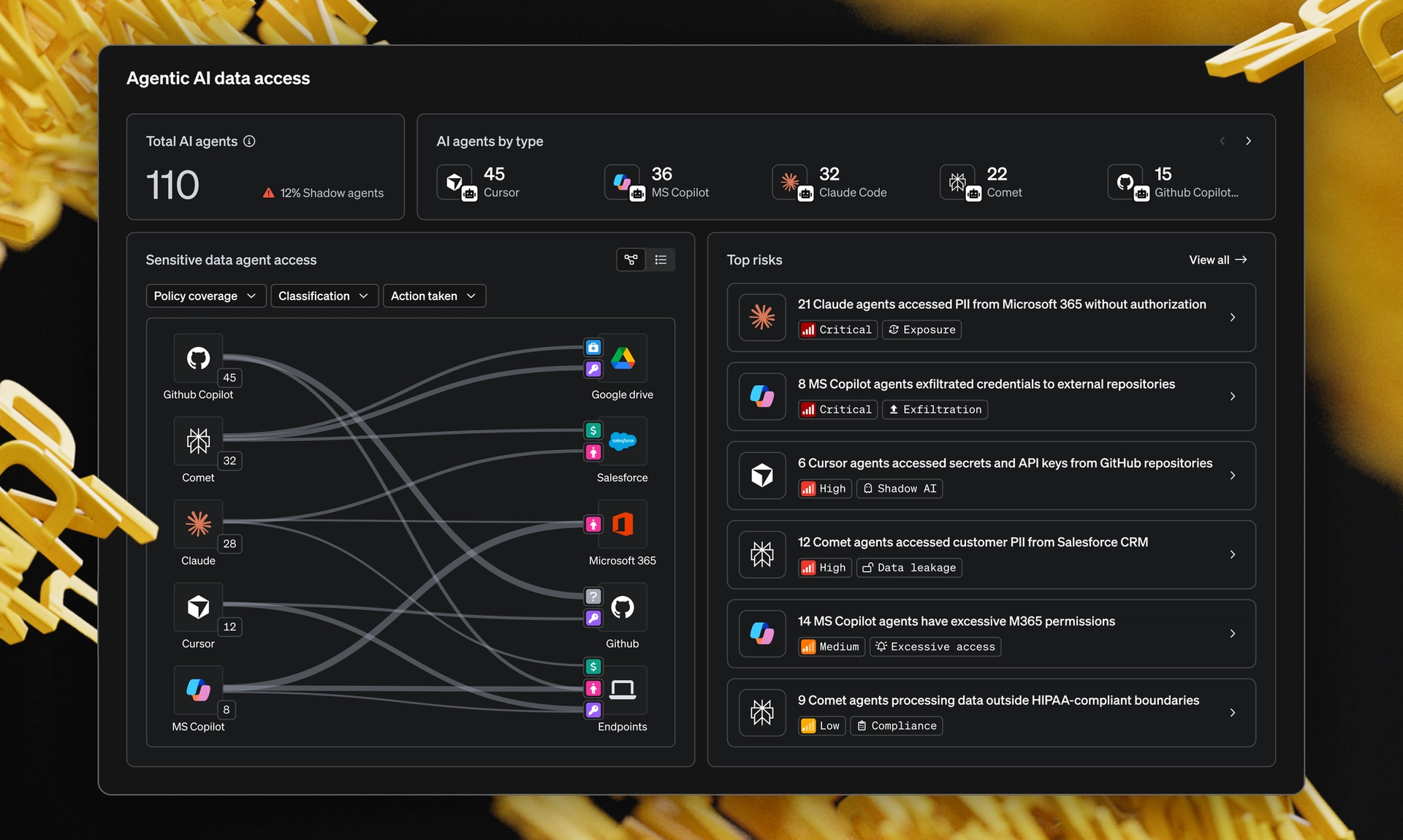

Well, that's going to bring us to today's deep dive. And so we're going to talk about What Matters Now.

We're going to focus on this one big topic around the idea of, so you're talking about the agentic AI acting as a person. It's a non-human identity, a person now, but it has privilege to do certain things. And you and I were chatting off-camera before we started.

Really, this is coming down to the idea of converging your data security, your DLP programs, with your insider risk, and now adding the flavor of agentic AI, non-human identities who have privilege and authority to act on behalf of a business process or yourself, if you're a human, to do something that you've approved them to do.

How do we converge these two systems for best effect? And why don't you explain some of these topics to us, Landen?

Landen Brown

Yeah, it's tough. And I think it would be wrong for any one person or company to say that they have all the answers. So what I can do is speak from my experience and what we see, what our customers have struggled with, what I struggled with at the agency and what I've struggled with at other companies.

There's obviously these two different perspectives on what we're trying to keep safe and how we're trying to do it. One is data loss prevention. It's the ability to understand where data is and stop it from going from A to B.

The other side is we have insider risk or IRM tools. You have DTEX, you have RedVector, you have Gurucul, you have these other solutions that are just focused on what is the user doing. It doesn't really have a whole lot of context around data, but it knows if they're on a PIP and it knows if they have done these specific actions in the past 30 days, and we're pumping that out to a SIEM. And so we're trying to correlate what's my risk score and then correlate that with what's going on in DLP.

The challenge is everything is becoming a little bit more coalesced. We're seeing it from data at rest perspectives into data in motion. Now we're seeing it in insider threat. We're seeing non-human identities starting to be considered or treated as human identities in some cases based off some of those other activities with Amazon and the shop accounts that we've talked about.

And so we're starting to see this consolidation of technology, which is really just the desire for consolidation of perspective. I need to understand how identities are operating. It's no longer enough to just understand the identity perspective. Is the identity doing things that should have greater weights against them, like being on a PIP, like being on PTO, like impossible travel? But also, are they touching data? And it's no longer good enough to just say, are they touching data? We have to be able to answer, are they touching the right data or the wrong data? And if they are doing that, are they sending data elsewhere? And not just elsewhere, but the right data elsewhere or the wrong data elsewhere?

And so there's this layered set of questions that we now have to ask. And the more we build agentic applications and the more we enable our workforce to adopt generative AI, the faster they're working, whether that's they complete eight hours of work in two hours or they're completing 24 hours of work in eight hours instead.

We still have these questions that we're going to have to ask at an increasingly fast scale. And so we're just trying to keep up.

And so when we look at this coalescing of technology, I really think it's from the desire for security practitioners to have a consolidation of perspective in this regard.

Samuel Hill

Well, it kind of sounds like there's an issue of trust. That's what it really comes down to in a lot of ways, in my opinion, right? How much do you trust the person? And it may be very little. Maybe you're a dyed-in-the-wool zero-trust person and you have been trying to achieve that for many years, and good luck to you.

So how do we apply trust to this non-human identity, to this agentic thing, when you want to be able to track their activity? It just changes the paradigm.

You could say there's no way Landen can travel from Idaho to Singapore within three hours. It's not physically possible. Landen can have very specific controls based on his role. But these agentic things are blurring the lines between roles and trust and access. They're digital and they're not physical, so they're not subject to the same rules. And I feel like this is really throwing a wrench into everything.

Landen Brown

It has. And there are some really interesting arguments on both sides.

On one side, you have one group of people that are really adamant, and they're saying, hey, you can't expect me to trust agentic AI like a person. I will always put it in this box. It will never get out. I don't care if there's efficiency to be had.

On the other side of the fence, you have people that are very adamant that, hey, I can't trust my tenured employee to write an email better than this, to handle an incident response case better than this, to be able to work faster than this. Ninety-nine times out of a hundred, it's way more accurate than the employees that I'm paying as well. So why would I not trust this the same way?

At the end of the day, part of their argument is also that I can't trust what Bob in accounting does when he goes home from work, and I can't trust what he's doing internally anyway. I can control what an LLM does to a greater extent in some cases.

And so you have these differing perspectives, which is actually really interesting.

A very poor relation to this, Samuel, is when McDonald's started accepting debit cards as a form of payment and not cash. If you go back and watch those videos, people were very split on the topic. Some thought it was the end of the world and the end of financial accountability. Others were saying, this makes my life so much more simple. Why would I not use this? I can offload that accountability to the bank.

Samuel Hill

Now, it's a fantastic perspective because you're right, the line is still to be determined and we're still having to argue it out.

Now, I do think the value in conversations like this, because on one side you have, and this is maybe what I hear a lot more in my online circles that I participate in, it's the AI is the greatest thing that's ever happened and it's the panacea. It's going to make things so much better.

And absolutely, do I use AI tools every single day? You know it, and I'm sure you do as well. And you and I have had conversations about vibe coding certain things, using AI to help us. These are things where it's very possible that it could be better.

Is it yet? I am not fully convinced quite yet. The level of output that I have seen, the quality of output that I have seen, I don't know that I would trust an LLM better than I would trust somebody like you. You know what good looks like because you've been around, you've seen it done once or twice, you've experienced the good and the bad.

Is there a place where we get to the point where that human experience is no longer needed, or will we always need to have some human in the loop to be able to trust AI to do what we want it to do?

Landen Brown

You know, I think one of the fundamental reasons we can't answer this question very well comes from, I think, the overall, and this quote actually comes from the CISO, or he was the CISO at the time, he might still be, at Molina Healthcare.

I was at dinner with him back in, it must have been January or February, and we were on this topic. One of the things that he said was, the reason nobody trusts AI is because they're asking an undeterministic solution to do something deterministic. And this is the first time in tech that we've ever tried to attempt that.

You look at programming, you look at traditional software engineering, me writing a script to do something, computers will only do exactly what you tell them to do. If there's a bug in your code, it's because you told it to do something wrong. Go fix your code. If your application's crashing, it's because you didn't tell it to do something right, so go tell it to do the right thing.

AI has flipped that perspective on its head. And so it's ultimately saying, hey, I want to go do these deterministic things, now I'm going to try to do them undeterministically with an LLM or some kind of agent.

And I think that's really the innovation that we're going to have to see over the next one to five years, how good undeterministic things get at doing deterministic things, or whether the architecture of AI becomes more deterministic in some ways.

There's been a lot of time where the industry has talked about this sense of being multimodal. It was actually, if you go look at the study done for a machine-learning system to play Minecraft, it was the perfect test for early machine learning.

One of the things it tested was, can you be multimodal and reinforce yourself while being multimodal? And what that means is, when we look at Go, the Chinese chess game, that was a single model with one purpose to do one thing.

When you look at the challenges in robotics of getting a robot to walk and do dishes on its own, it can't just have one model because it would have a model for walking, a model for seeing, a model for moving its arms. It needs to be multimodal.

And it goes back to this research they did in Minecraft, of all things, where it says, hey, I have to eat, I have to survive, I have to chop trees, I have to craft things, I have to walk, I have to run, I have to fight. I have to have different models. I need to be multimodal to achieve this.

And we spent a lot of time, and still are as an industry, really trying to figure out how do we create extensible multimodal applications that are fit for the enterprise and scalable and buildable for the enterprise.

So what I'm really curious to see is, do we continue going down the path of multimodal dependency for the enterprise and how we enable AI, or are we going to start to see things become more deterministic as single models but with a super high focus? And I'm not quite sure which one it'll end up being.

Samuel Hill

Well, because I think that's very human when you think about it. It's a very human approach to problem solving.

There are some things that we know to be true, right? Like the speed of light, the gravitational force. I mentioned some of the laws of physics that we've clearly understood.

There are many areas of scientific inquiry where we're only scratching the surface and barely understand. We could not put a lot of confidence or determinism into certain things. And I think that's the beauty of scientific inquiry. We're not certain. We have a hypothesis, we test it out and we make adjustments as we go. And that's a very non-deterministic way of problem solving.

So even in humanity, we look at a problem and there are certain things that I know to be true, but there's a lot of gray area in these non-deterministic things. And as humans, we don't have a great track record historically of dealing well with non-deterministic outcomes or situations where there's gray area, just generally speaking.

And so I guess it's fair to expect AI to maybe take a little bit of time to catch up on that. And perhaps maybe I would trust it less if it started saying deterministically that things are true that we know are a little more gray than an AI program would want to admit.

Landen Brown

It's a great point.

Samuel Hill

Well, that's a fascinating conversation, Landen. I think you and I will get this kicked off again over beers next time that we're together. Maybe we'll leave the cameras off, because I always enjoy talking about this with you.

But it brings us to our last thing today. Landen, what did you learn today?

Landen Brown

I think over the course of our conversation, if I had to passively and in the third person look at it, it would be that humans have trust problems.

And a lot of people try to say that's a bad thing, and I think it's a good thing. I think we should continue questioning the right methodology, not just for AI and multimodal this and multimodal that, but also are we doing things correctly from a DLP perspective, from an insider risk perspective, from a technology perspective, from an education and enablement perspective? What can we do to continue being good leaders in our organizations?

And I think what I learned today is that question is more relevant now than at any other time in history.

Samuel Hill

Well, what I've learned today, Landen, is that in the spirit of Thanksgiving, I am grateful for the severe complexity of the human experience, the human approach to knowledge and learning. And I stand in awe at what has been achieved in the human body, in the human system and in cultures all around the world. It's quite amazing.

So just thinking about it from how we're training AI models as just a very small, limited fraction of what happens naturally, I mean, you and I both have more than a few children, and watching them basically learn, grow and adapt, be non-deterministic across their everyday lived experience, I'm very thankful for that experience and I'm grateful to be a part of it.

So also grateful for you, my friend. Thank you for taking the time today.

And to all of you watching and listening, happy Thanksgiving. We hope it's filled with joyous times around a table full of people you love, with food that you enjoy, as we count our blessings here in the United States.

So for Landen Brown, I am Samuel Hill, and that's all for now.